ChatGPT and generative artificial intelligence (AI) tools are evolving rapidly, fueling ongoing conversations and challenges within our VIU community. Our previous blog posts delved into the capabilities and constraints of AI, proposed strategies for responsible usage, and offered insights into integrating generative AI into course design and assessment. In this blog post, we’ll explore compelling questions about AI that have surfaced through discussions across VIU’s campuses.

How do ChatGPT and AI work?

Generative AI mimics human interactions to produce various types of content, including text, imagery, audio and simulated data (Lawton, 2023). ChatGPT, short for Chat Generative Pre-trained Transformer, is a prominent example of a large language model, a type of generative AI. Large language models are trained to recognize and analyze patterns in large existing datasets, much of which is gathered from the internet. ChatGPT generates a response by repeatedly predicting which words are most likely to come next, given the prompt and the text it has produced so far. The November 2022 release of ChatGPT drew on web data up to 2021 to develop its responses to prompts (Ramponi, 2022). ChatGPT continues to evolve, partly through feedback from chat interactions.
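To make "the likelihood of specific word sequences" a little more concrete, here is a deliberately tiny sketch. It counts which word follows which in a short, made-up sample text (a simple "bigram" model), converts those counts into probabilities, and then builds a sentence one word at a time by choosing the most likely next word. This is an illustrative assumption, not how ChatGPT is implemented: ChatGPT uses a large transformer neural network trained on vastly more data, but the core idea of predicting a probable next word is similar. The sample text and function names are hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical, tiny training text; real models learn from web-scale datasets.
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."


def train_bigrams(text):
    """Count how often each word is followed by each other word."""
    words = text.split()
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def next_word_probs(counts, word):
    """Convert the follow-counts for `word` into next-word probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}


def generate(counts, start, length):
    """Append the single most likely next word, one word at a time."""
    out = [start]
    for _ in range(length):
        probs = next_word_probs(counts, out[-1])
        if not probs:  # no known continuation for this word
            break
        out.append(max(probs, key=probs.get))
    return " ".join(out)


model = train_bigrams(corpus)
print(next_word_probs(model, "the"))  # "cat" is the most likely word after "the"
print(generate(model, "the", 4))
```

Real systems usually sample from the probability distribution rather than always taking the top word, which is one reason the same prompt can produce different answers. The sketch also shows why outputs inherit the patterns, and the errors, of the training text: the model can only reproduce what its data makes likely.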

What is VIU’s position on AI and ChatGPT?

VIU’s position statement can be accessed here: Academic Integrity and Generative Artificial Intelligence: Context, Considerations, Emerging Best Practices.

GenAI tools have the potential to enhance learning when used appropriately. Faculty will continue to determine whether and to what degree they integrate technology into their teaching practice.

What are some limitations of AI and ChatGPT?

ChatGPT’s answers may be biased or false because it is trained on data that may already contain biases and errors. ChatGPT is improving by using information provided by researchers and developers, as well as feedback from users. This information may sometimes include mistakes, misunderstandings, opinions, and inaccuracies (Wu, 2022). In addition, the specific phrasing of a prompt affects how the AI responds (Squires, 2023). When using tools such as ChatGPT, we recommend critically reviewing, fact-checking, and verifying their outputs, because ChatGPT cannot reliably identify its own errors or adequately acknowledge its sources of information (Ramponi, 2022).

How can AI tools be used effectively for teaching and learning?

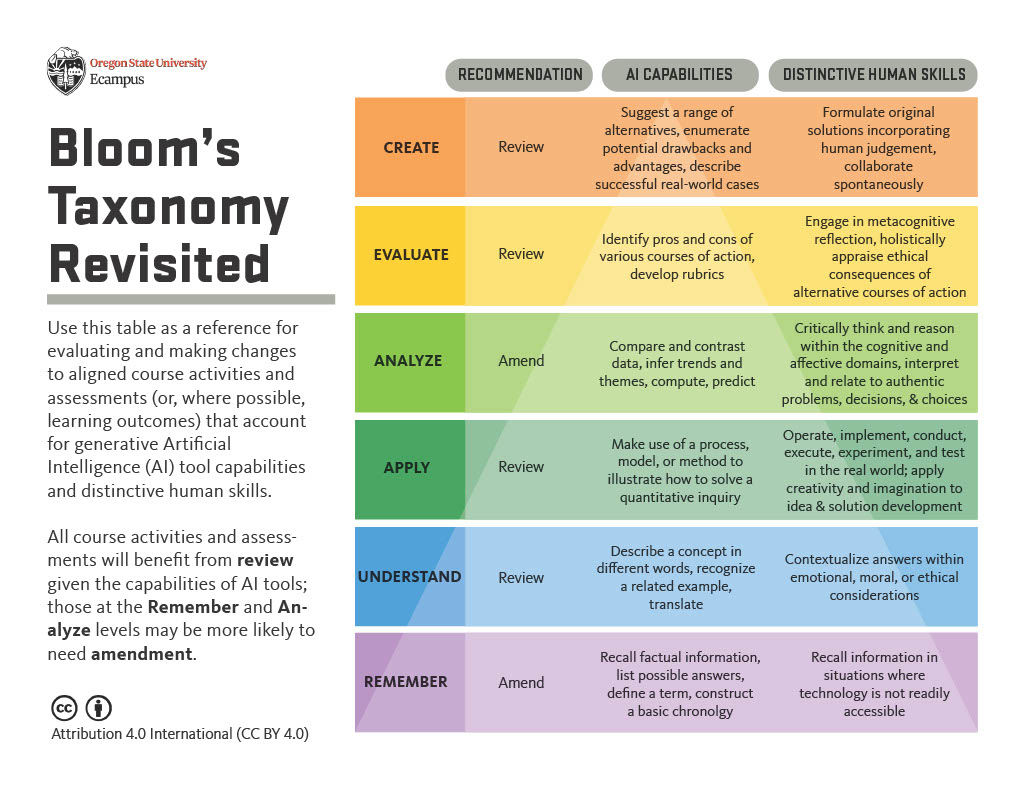

Integrating AI into teaching can be valuable, especially since many students will likely explore AI tools on their own. Discussing AI in a subject-specific context can help students think carefully about AI and its broader societal implications, including accuracy, privacy, security, and bias. Activities or assessments that require learners to analyze, improve, or critically evaluate text or code can help develop students’ higher-order skills.

For instructors, AI tools like ChatGPT offer features to support instructional planning and content creation. For instance, ChatGPT can assist in generating ideas, planning, and drafting instructional materials, including topics, outlines, lesson plans, content, questions, and other educational resources. Go to the Generative AI and Assessment blog for strategies to harness AI.

May I use AI to grade student work?

Students’ original work is their intellectual property. This means instructors cannot submit a student’s work to an AI tool that stores it as part of its data without first obtaining the student’s informed consent. However, some AI tools can support grading in other ways, for example, by helping to develop rubrics or grading criteria.

What is informed consent?

When presenting students with any third-party tool (including AI tools) that involves submitting or collecting student data, instructors must disclose what the tool is, why it is being used, what data it collects and how that data will be used. Students can then give informed consent or opt out of having their data collected. If a student opts out, you may not submit that student’s data to the tool in question.

How can I discourage students from misusing AI?

How you use these AI tools will vary based on your subject, teaching approach, and what you want students to achieve. Instructors should clearly state in their course outlines and assignment instructions whether AI use is permitted for specific activities. The CIEL blog provides resources to support you in setting course expectations.

Discipline-specific discussions and assignments that involve analyzing or improving chatbot-generated text or code also help students recognize AI’s limitations. Encourage students to critically assess the responses these tools generate and to check factual claims against reliable sources. Assessments can also be designed to discourage and prevent the inappropriate use of AI. Refer to the CIEL Generative AI and Assessment blog for strategies.

The use of AI detectors is discouraged because they are generally unreliable. In addition, many plagiarism detectors add submitted content to their databases. Because students own the intellectual property rights to their original work, instructors cannot upload a student’s original work to these detectors without first obtaining the student’s informed consent.

Can I require students to use ChatGPT?

Many AI platforms, including ChatGPT, store the details you provide when creating an account, such as your name, phone number, email, and payment information (for paid accounts). For this reason, students cannot be required to create an account. AI platforms may also keep records of the messages you send to help improve the system, so avoid sharing sensitive or private data.

Students may have privacy or intellectual property concerns about uploading their original work to an AI tool, since doing so can add the work to the tool’s dataset. If you design an assignment that involves students uploading their original work to an AI tool, you must provide alternative ways to complete it. One option is to have students work with ChatGPT content provided by the instructor, rather than asking every student to sign up for the tool. OpenAI’s commonly asked questions page has more information on the company’s policies regarding ChatGPT.

How are workplaces using these AI tools, and how do we prepare students to be technologically proficient with them?

To prepare students for real-world learning and future work, we encourage you to clearly define how your discipline is using AI and to identify domain-specific best practices. AI has a wide array of applications that can streamline tasks and enhance productivity across many industries and professions. Use cases include enhancing customer service, translating languages, generating content for product descriptions and marketing, summarizing complex information, and providing starting points for presentations (Silkin, 2023).

As educators, we can lead by example by using these technologies appropriately in our teaching and assessments and by modelling good digital behaviour and responsible use. In particular, we should teach students about the potential for bias and misinformation when using these tools. Ultimately, we can work alongside the technology, teaching students how to ask effective questions and refine the answers they receive to get better results.

Where can I learn more about ChatGPT and generative AI tools for teaching and learning?

Join us at our upcoming CIEL mini-conference on Gen AI Learning & Teaching on October 26. This conference will provide a platform to engage in in-depth discussions, share insights, and explore the latest developments in generative AI. We look forward to your active participation, questions, and the opportunity to enhance our community’s understanding of GenAI. In the meantime, below are some recommended resources about ChatGPT and AI in education.

Additional Supporting Resources

- 10-minute chats on Generative AI. This is a series of 10-minute conversations about Gen AI hosted by Tim Fawns, associate professor at Monash University, Australia.

- ChatGPT & Education (Trust, 2023) is a Google document that describes what ChatGPT can do, what you need to know about ChatGPT, and how educators can use it.

- AI Readings by the University of Toronto provides an extensive resource page about artificial intelligence in teaching and learning.

- Introducing ChatGPT describes the model’s methods, limitations, and related research.

- How AI can be used meaningfully by teachers and students in 2023 – Teaching@Sydney shares some ideas about how we might productively engage with ChatGPT.

References

Lawton, G. (2023). What is generative AI? Everything you need to know. TechTarget.

Ramponi, M. (2022, December 23). How ChatGPT actually works. AssemblyAI.

Silkin, L. (2023). Generative AI and the Workplace. Future of Work Hub.

Squires, A. (2023, January). Developing Topics with Chat GPT. [PowerPoint slides]. Avila University Writing Center.

Wu, G. (2022, December 22). 5 Big Problems With OpenAI’s ChatGPT. MakeUseOf.